|

This decryption code is kept secret (or private) so only the person who knows the key can decrypt the message. The other key allows you to decode (or decrypt) the message.

One key tells you how to encrypt (or code) a message and this is "public" so anyone can use it.

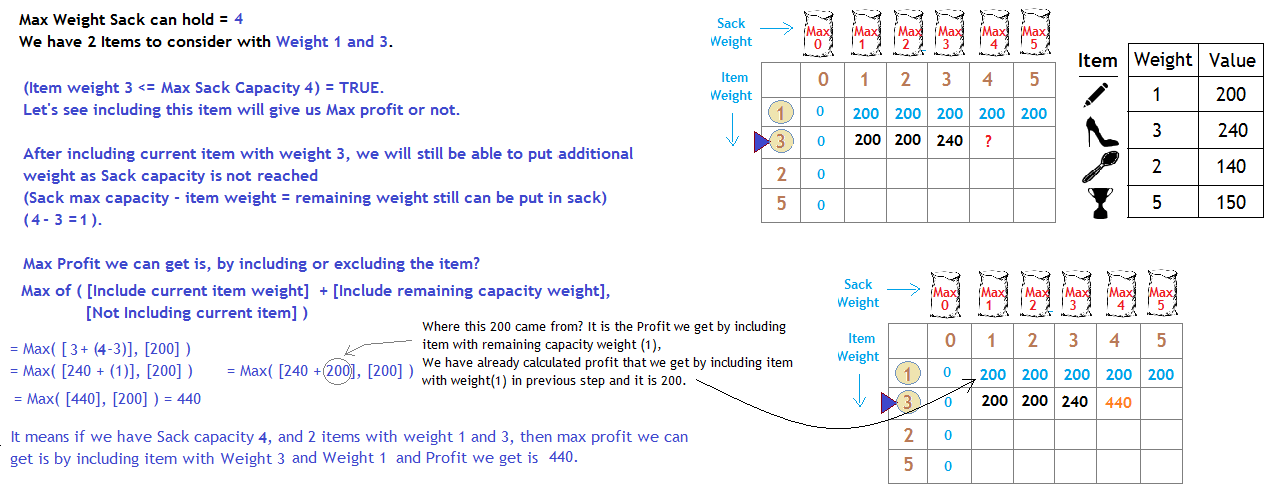

It takes at most n steps and here n is the size of the input (the length of the array).Public-Key cryptography was invented in the 1970s by Whitfield Diffie, Martin Hellman and Ralph Merkle. Now The regular O(n) caseīy contrast, searching an array for a given element runs in polynomial time: O(n). But here, n is the magnitude of the input, not it's size. For a given number n we are checking if it's divided evenly by each integer number in range 2.√n, so the algorithm takes √(n−1) steps. Time complexity grows exponentially in the size of W in binary (or decimal) representation.Īnother simple example that helped me understand the pseudo-polynomial concept is the naive primality testing algorithm. If we increase the capacity to 1024, we would have just 10 bits of the input for W instead of 2, but the complexity has increased by a factor of 512. Our input has only grown by one bit, but the computational complexity has increased twofold. Now we shall increase the knapsack capacity to 4, keeping the rest of the input. Let's say we have an instance with knapsack capacity 2. The computational complexity (i.e how processing is done inside a computer through bits) is only concerned with the size of the inputs, not their magnitudes/values.ĭisregard the value/weight list for a moment. The Knapsack algorithm's run-time is bound not only on the size of the input (n - the number of items) but also on the magnitude of the input (W - the knapsack capacity) O(nW) which is exponential in how it is represented in computer in binary (2^n). We haven't seen that kind of dramatic response to changes in value in other algorithms before this one, which is why it might seem like we're treating Knapsack differently - but that's a genuine analysis of how it responds in a non-polynomial fashion to changes in input size.

Add one bit to W and you double the running time of the algorithm. The trick with the Knapsack algorithm that we discussed is that it's unusually sensitive (relative to other algorithms ) to the magnitude of a particular parameter, W.

The magnitude of the numbers involved always makes a difference, even in the other algorithms like binary sort, once you expand beyond the buffer of safety conventional processors give us "for-free" by handling 4-8 byte batches. (You can imagine that the Big-O notation is hiding a constant factor that divides-out 32 or 64 bits-per-datum, leaving only the number-of-data-points whenever each of our numbers fit in that many bits or less)īut try reworking with other algorithms to act on data sets involving big ints - numbers that require more than 8 bytes to represent - and see what that does to the runtime. Because of the way most processors are built to handle 4-8 byte numbers at a time at no additional cost (relative to numbers than fit in, say, 1 byte), we rarely encounter a change in running time from scaling our numbers up or down within ranges we encounter in real problems - so the dominant factor remains just the sheer quantity of data points, the n or m factors that we're used to. In most of our problems, we're dealing with large lists of numbers which fit comfortably inside standard int/float data types.

0 Comments

Leave a Reply. |

Details

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed